machine studying

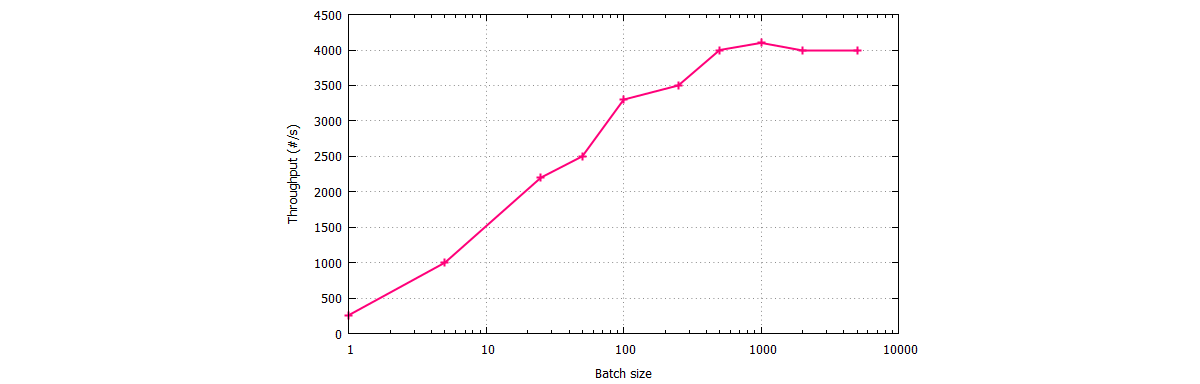

Tradeoff batch size vs. variety of iterations to coach a neural network

However, this is at the price of slower, empirical convergence to that optima. On the other hand, using smaller batch sizes have been empirically shown to have sooner convergence to “good” solutions. It will bounce across the global optima, staying outside some ϵ-ball of the optima where ϵ is dependent upon the ratio of the batch measurement to the dataset dimension. The mini-batch accuracy reported during training corresponds to the accuracy of the actual mini-batch at the given iteration.

As expected, the gradient is bigger early on throughout training (blue factors are greater than inexperienced points). Contrary to our speculation, the imply gradient norm will increase with batch measurement! We expected the gradients to be smaller for larger batch measurement due to competition amongst data samples. Instead what we find is that larger batch sizes make larger gradient steps than smaller batch sizes for the same variety of samples seen. Note that the Euclidean norm can be interpreted because the Euclidean distance between the brand new set of weights and starting set of weights.

The picture is much more nuanced in non-convex optimization, which these days in deep learning refers to any neural network mannequin. It has been empirically observed that smaller batch sizes not solely has quicker coaching dynamics but in addition generalization to the check dataset versus bigger batch sizes. But this assertion has its limits; we know a batch measurement of 1 usually works quite poorly. It is generally accepted that there’s some “sweet spot” for batch size between 1 and the entire training dataset that may present one of the best generalization.

Batch size

During training by stochastic gradient descent with momentum (SGDM), the algorithm groups the total dataset into disjoint mini-batches. Next, we are able to create a function to suit a model on the issue with a given batch dimension and plot the learning curves of classification accuracy on the practice and test datasets.

Batch size is one of the most important hyperparameters to tune in trendy deep learning systems. Practitioners often wish to use a larger batch dimension to train their model as it permits computational speedups from the parallelism of GPUs. However, it is well known that too massive of a batch dimension will lead to poor generalization (though presently it’s not identified why that is so).

The blue points is the experiment conducted in the early regime where the model has been educated for two epochs. The green points is the late regime where the model has been educated for 30 epochs.

Effect of Learning Rate

What is a batch size?

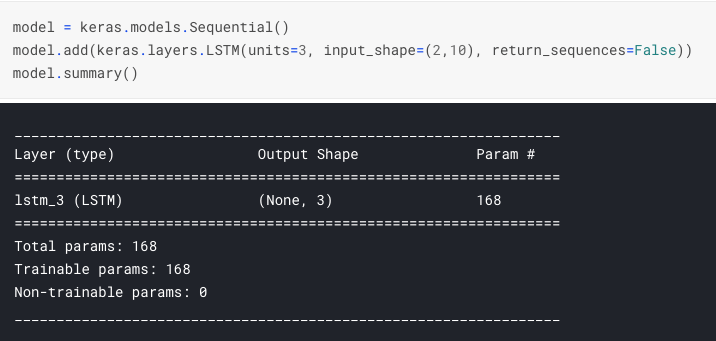

Batch size (machine learning) Batch size is a term used in machine learning and refers to the number of training examples utilized in one iteration. The batch size can be one of three options: batch mode: where the batch size is equal to the total dataset thus making the iteration and epoch values equivalent.

When the batch is the scale of 1 sample, the educational algorithm is called stochastic gradient descent. When the batch dimension is more than one sample and less than the dimensions of the coaching dataset, the training algorithm is called mini-batch gradient descent. For shorthand, the algorithm is often referred to as stochastic gradient descent whatever the batch size. Given that very giant datasets are sometimes used to train deep learning neural networks, the batch measurement is rarely set to the scale of the coaching dataset. The variety of examples from the coaching dataset used within the estimate of the error gradient known as the batch dimension and is a crucial hyperparameter that influences the dynamics of the training algorithm.

It is frequent to create line plots that present epochs along the x-axis as time and the error or skill of the model on the y-axis. These plots might help to diagnose whether or not the mannequin has over realized, under realized, or is suitably fit to the coaching dataset.

- A batch size of 32 implies that 32 samples from the coaching dataset shall be used to estimate the error gradient earlier than the model weights are up to date.

- The model will be match for 200 training epochs and the take a look at dataset will be used as the validation set so as to monitor the performance of the model on a holdout set throughout training.

- In this example, we will use “batch gradient descent“, meaning that the batch dimension will be set to the dimensions of the training dataset.

One epoch means that every pattern within the coaching dataset has had a chance to update the internal mannequin parameters. For example, as above, an epoch that has one batch known as the batch gradient descent studying algorithm. For Deep Learning training issues the “studying” half is actually minimizing some cost(loss) operate by optimizing the parameters (weights) of a neural network mannequin. This is a formidable activity since there can be millions of parameters to optimize. The loss operate that’s being minimized features a sum over all of the training information.

Batch size (machine learning)

This is as a result of in most implementations the loss and hence the gradient is averaged over the batch. This means for a fixed number of coaching epochs, larger batch sizes take fewer steps. However, by increasing the educational rate to zero.1, we take larger steps and may attain the solutions which are farther away. Interestingly, in the earlier experiment we showed that larger batch sizes transfer further after seeing the identical number of samples.

Namely, that the mannequin rapidly learns the problem as compared to batch gradient descent, leaping as much as about 80% accuracy in about 25 epochs rather than the a hundred epochs seen when utilizing batch gradient descent. We may have stopped training at epoch 50 as an alternative of epoch 200 as a result of quicker training. The instance below uses the default batch measurement of 32 for the batch_size argument, which is greater than 1 for stochastic gradient descent and fewer that the size of your coaching dataset for batch gradient descent. The finest options appear to be about ~6 distance away from the preliminary weights and utilizing a batch size of 1024 we simply can’t attain that distance.

To deal with this downside, another approaches are used for avoiding the problem. Adding noise to different components of models, like drop out or somehow batch normalization with a moderated batch size, assist these learning algorithms to not over-match even after so many epochs.

Well, it is as much as us to outline and determine once we are glad with an accuracy, or an error, that we get, calculated on the validation set. It may go on indefinitely, however it doesn’t matter a lot, as a result of it’s close to it anyway, so the chosen values of parameters are okay, and lead to an error not distant from the one found at the minimal. In deep-learning era, it’s not a lot customary to have early cease. In deep-studying again you could have an over-fitted model if you practice so much on the coaching information.

For convex functions that we are trying to optimize, there’s an inherent tug-of-warfare between the benefits of smaller and bigger batch sizes. On the one extreme, utilizing a batch equal to the complete dataset ensures convergence to the global optima of the objective function.

What Is the Difference Between Batch and Epoch?

Optimization is iterated for some number of epochs until the loss perform is minimized and accuracy of the fashions predictions have reached a suitable accuracy (or it has just stopped enhancing). The y-axis shows the common Euclidean norm of gradient tensors across 1000 trials. The error bars indicate the variance of the Euclidean norm across 1000 trials.

In this instance, we’ll use “batch gradient descent“, which means that the batch size might be set to the size of the coaching dataset. The model shall be match for 200 coaching epochs and the check dataset will be used as the validation set to be able to monitor the performance of the mannequin on a holdout set during training. A batch measurement of 32 signifies that 32 samples from the training dataset might be used to estimate the error gradient before the model weights are up to date. One coaching epoch means that the educational algorithm has made one pass by way of the training dataset, where examples had been separated into randomly selected “batch size” teams. When all coaching samples are used to create one batch, the training algorithm is known as batch gradient descent.

Therefore, coaching with giant batch sizes tends to move additional away from the starting weights after seeing a hard and fast number of samples than training with smaller batch sizes. In different phrases, the connection between batch size and the squared gradient norm is linear.

It is typical to do some optimization technique that may be a variation of stochastic gradient descent over small batches of the enter training data. The optimization is done over these batches till the entire knowledge has been covered. One full cycle through all of the training data is usually known as an “epoch”.